November 2025 Newsletter: AI boosts GDP through infrastructure spend, not yet productivity

AI is lifting GDP, but not the way you expected

Image: Microsoft

Measuring AI’s Impact in Gigawatts, Not Profits

Enterprise AI has yet to deliver widespread financial returns, with a recent Boston Consulting Group study of 1,250 firms finding only 5% are achieving value at scale. This highlights the significant challenge of full AI transformation, as 60% of companies report generating only minimal value from their investments. link

Despite slow enterprise adoption, AI infrastructure spending is a primary driver of economic expansion. Harvard economist Jason Furman calculated that while investment in information-processing technology was just 4% of U.S. GDP in the first half of 2025, it accounted for a staggering 92% of all GDP growth. link

Massive capital commitments for data centers and chips from firms like OpenAI, Nvidia, and Oracle are forcing analysts to revise growth forecasts. McKinsey is already considering raising its aggressive $5.2 trillion five-year global spending estimate. Data centers are now sized by their power demand in gigawatts, a sign that electricity supply has become a critical constraint. link

Walmart, the second-largest U.S. e-commerce player, has partnered with OpenAI to enable shopping directly within ChatGPT through its Instant Checkout feature. Following Etsy and Shopify, the integration aims to create proactive retail experiences, helping “customers anticipate their needs before they do,” according to the company. link

However, a recent study analyzing 973 e-commerce sites found organic LLM traffic underperforms nearly all traditional digital channels. While bounce rates are favorable, key financial metrics like conversion rate and revenue per session lag behind paid and organic search, though performance trends show gradual improvement. link

India, a global leader in business process outsourcing (BPO), is rapidly adopting AI in its call centers. Underscoring this trend, Anthropic announced India is its second-largest market for Claude usage, and Reuters reports Google plans to invest $15 billion in a new AI data center there. BPO Anthropic link

Governance, Risk, and the Labor Market

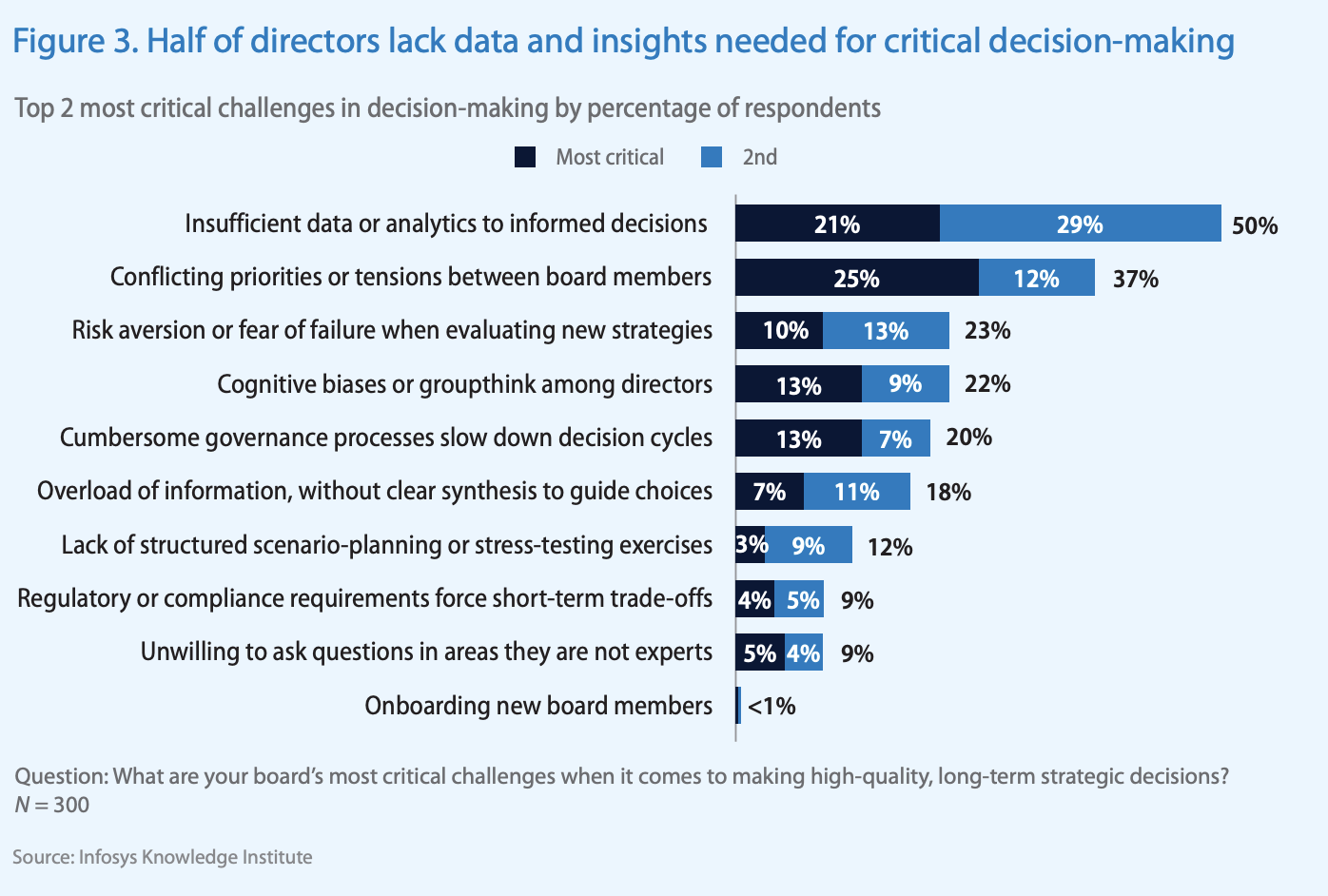

AI is increasing governance pressure on corporate boards. While an Infosys survey found most board members feel current on AI technology, 50% report lacking sufficient data or analytics for informed decision-making. This gap highlights the growing complexity of oversight in an era of automated, agent-based actions. link

Whether boards of directors have sufficient data and analysis for decision-making. Image: Infosys

The risks of inadequate governance were highlighted when Deloitte issued a partial refund to Australia’s Albanese government. According to The Guardian, the firm agreed to pay back a portion of its fee after a report it delivered was found to contain AI-generated “hallucinations” and incorrect references. link

Contrary to widespread disruption fears, Yale’s Budget Lab found no discernible evidence of AI-driven job losses since ChatGPT’s release. The researchers argue that major technological shifts historically take decades, not months, to materialize as widespread changes in the labor market, a pattern they expect to continue. link

In contrast, Amazon’s warehouse automation plans are accelerating. The New York Times reports the company expects robotics to help it avoid hiring 160,000 workers by 2027 and over 600,000 by 2033. This investment is projected to save approximately 30 cents per package fulfilled. link

Building a Secure Future for AI Agents

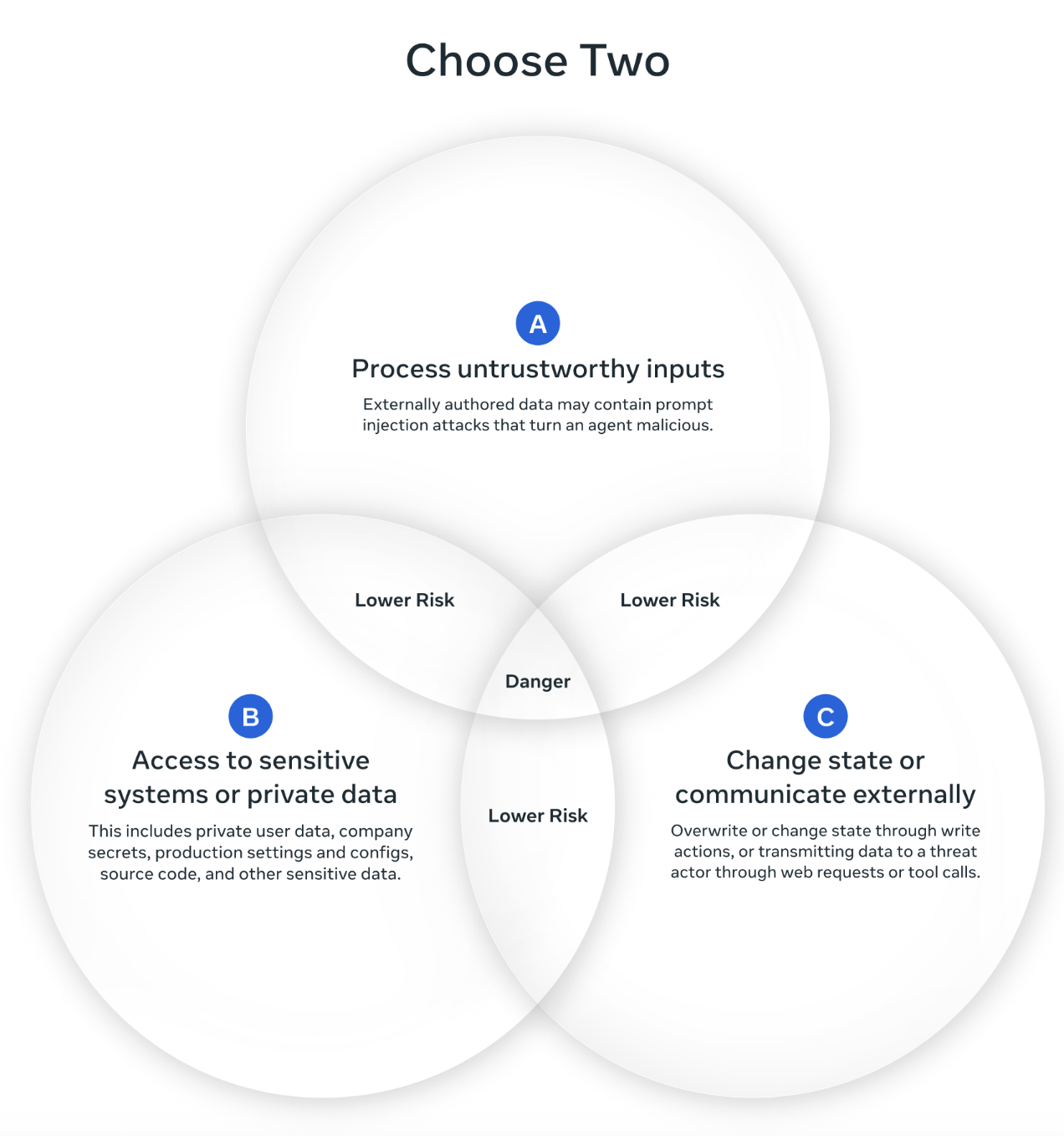

Pick two for higher security. Image: Meta

As AI agents become more autonomous, security frameworks are critical. Meta AI proposed an “Agents Rule of Two,” stating that to mitigate risk, an agent should possess no more than two of three key capabilities at once: processing untrusted inputs, accessing sensitive data, or acting on the external world.
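The rule can be read as a simple configuration check. The sketch below is one way to express it, not Meta's implementation: the class, field, and function names are hypothetical, chosen only to mirror the three capabilities listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical flags mirroring the three capabilities in the rule."""
    processes_untrusted_inputs: bool
    accesses_sensitive_data: bool
    acts_on_external_world: bool

    def enabled_count(self) -> int:
        # Booleans sum as 0 or 1, so this counts the enabled capabilities.
        return sum((
            self.processes_untrusted_inputs,
            self.accesses_sensitive_data,
            self.acts_on_external_world,
        ))


def satisfies_rule_of_two(caps: AgentCapabilities) -> bool:
    """Return True when at most two of the three capabilities are enabled."""
    return caps.enabled_count() <= 2


# An agent that reads untrusted email and private data but cannot act externally.
email_summarizer = AgentCapabilities(True, True, False)
# An agent with all three capabilities enabled at once.
unrestricted_agent = AgentCapabilities(True, True, True)

print(satisfies_rule_of_two(email_summarizer))
print(satisfies_rule_of_two(unrestricted_agent))
```

Under this reading, a configuration that fails the check would need to drop one capability or add a safeguard such as human approval before running.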

To address similar security concerns in development, Anthropic is designing tools that run agentic code in isolated environments. These “sandboxes” impose strict network and file-system restrictions, preventing autonomous agents from performing unintended or harmful actions while completing coding tasks and using other tools. link
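As a rough illustration of what network and file-system restrictions mean in practice, the sketch below checks a requested file path and URL against an allowlist. It is not Anthropic's tooling: the workspace path, host list, and function names are all assumptions, and a real sandbox would enforce such limits at the operating-system level rather than in application code.

```python
from pathlib import Path
from urllib.parse import urlparse

# Hypothetical policy values, chosen only for illustration.
WORKSPACE = Path("/workspace/project")
ALLOWED_HOSTS = {"pypi.org", "github.com"}


def is_path_allowed(path: str, workspace: Path = WORKSPACE) -> bool:
    """Allow only paths that resolve to a location inside the workspace."""
    root = workspace.resolve()
    # Joining then resolving collapses ".." segments; an absolute `path`
    # replaces the workspace prefix entirely and is therefore rejected below.
    resolved = (root / path).resolve()
    return resolved.is_relative_to(root)  # requires Python 3.9+


def is_host_allowed(url: str, allowed_hosts=ALLOWED_HOSTS) -> bool:
    """Allow only URLs whose hostname is on the allowlist."""
    return urlparse(url).hostname in allowed_hosts


print(is_path_allowed("src/main.py"))
print(is_path_allowed("../../etc/passwd"))
print(is_host_allowed("https://pypi.org/simple/"))
print(is_host_allowed("https://evil.example/upload"))
```

The point of the allowlist design is that anything not explicitly permitted is denied, so an agent misled by a malicious input has little room to read secrets or send data out.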