Golden Evaluation Sets: Enterprise AI’s Missing Feedback Loop

AI moves fastest in software engineering for a structural reason: the model tests its own output instantly. Write code, run it, see if it breaks, iterate in seconds. The feedback loop is nearly frictionless.

Source: Ken Calhoon

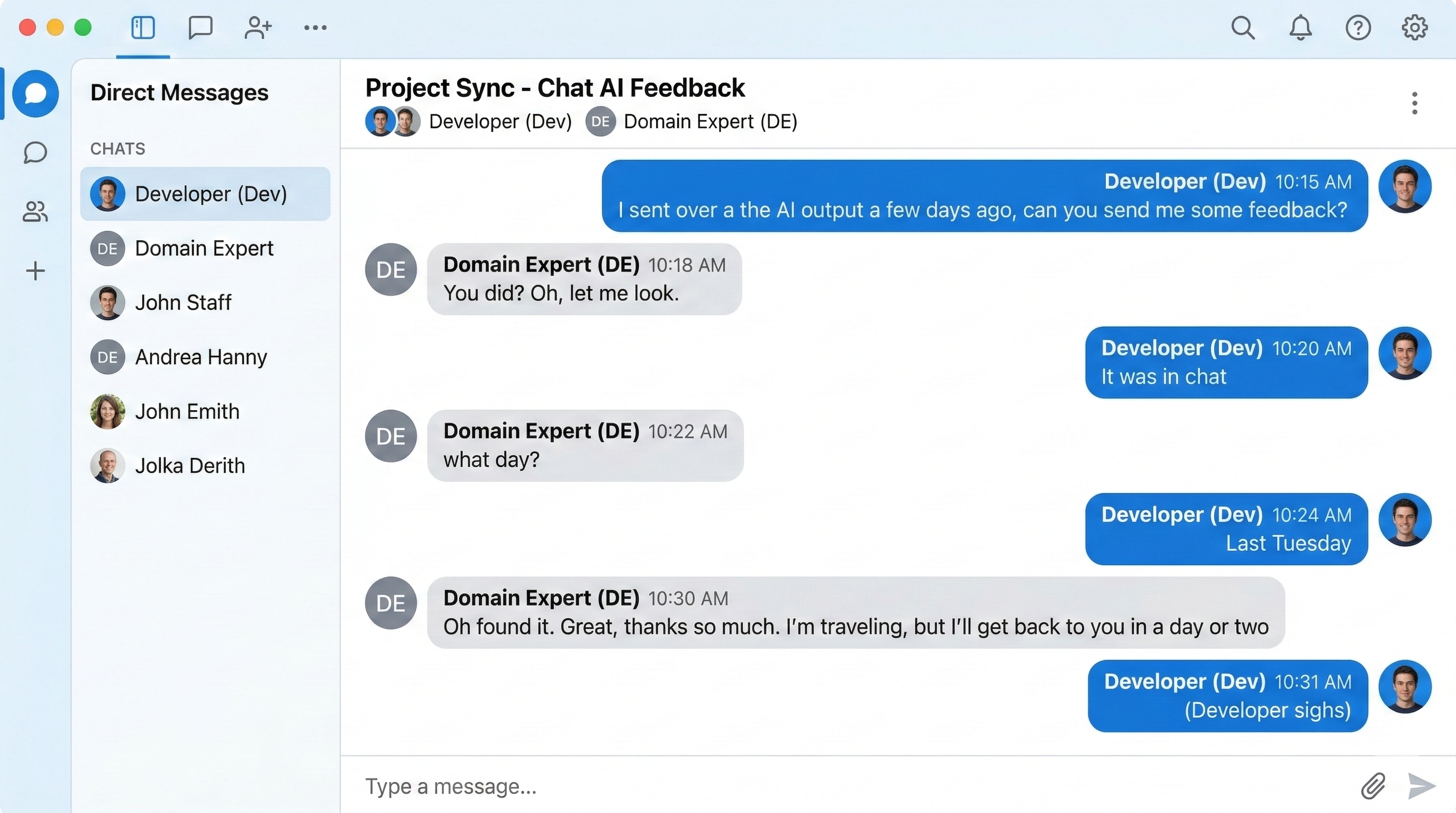

Now consider what happens when AI moves into knowledge retrieval, invoice matching, or warranty validation. There is no compiler. No automatic pass/fail. The default iteration cycle becomes: developers tweak the system, sample outputs get generated, domain experts review them days later, and the cycle restarts. That loop is brutally slow — and the further AI scales beyond IT, the more consequential this drag becomes.

The fix is a golden evaluation set built before development begins: 50 to 100 examples covering standard flows and realistic edge cases, with expected inputs and desired outputs defined for substance, format, language, and latency. This creates a synthetic feedback loop that replicates the speed advantage coding environments enjoy natively.

The quality benefit is obvious. The speed benefit is routinely overlooked. With automated evaluation in place, developers receive immediate pass/fail signals on every iteration. Refinement cycles compress from days to minutes.

The catch is real: domain experts must invest meaningful time before a single line of code is written. That feels counterintuitive under pressure to start building. But hours of upfront definition save weeks of slow, reactive review cycles. The math is unambiguous.

Leaders should make this a hard prerequisite: no use case enters development without a golden test set and a documented workflow or SOP. The upfront discipline is not a brake on momentum — it is what makes downstream velocity possible.